TL;DR

With a virtual router (here VyOS) and a proper routing table, it is possible to run VMware Tanzu on private networks without NSX-T.

The premise

I needed to evaluate the basic capabilities of VMware Tanzu with the CSI driver for PowerScale. To install Tanzu, also named Workload Management in vCenter, I followed that video and these 1, 2, 3 posts.

As a prerequisite, Tanzu needs at least two networks: one for the management cluster (also named Supervisor cluster) and one for the workload (i.e. the on-demand clusters that run the workloads).

In my lab, I only have one routable VLAN, with a limited number of IPs. For other activities, like Anthos validation, I use a single private network behind a NAT; unfortunately that configuration is not enough here.

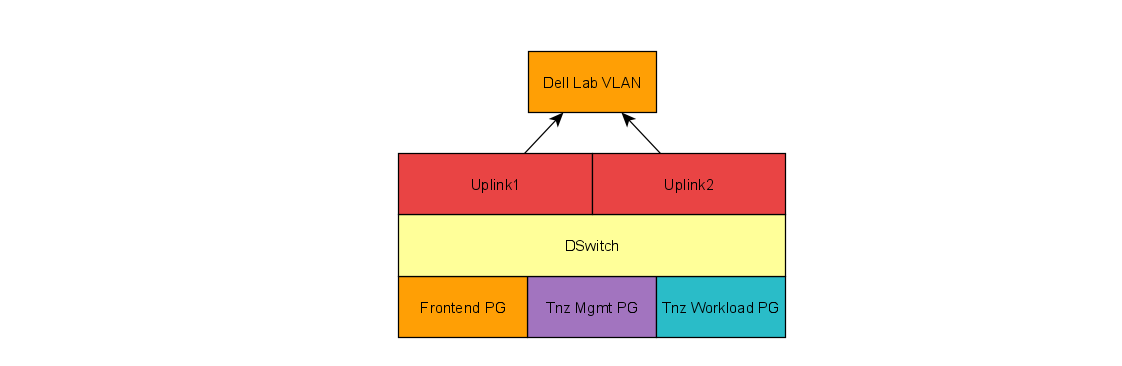

For Tanzu, I decided to use three networks:

- The frontend network (10.247.247.0/24), which is routable to the external world and will have the Load-Balancer VIP

- The management network (10.0.0.0/24), for the vSphere management of the supervisor cluster

- The workload network (10.0.1.0/24), to host all Tanzu clusters

The problem is, I do not have an NSX-T license to manage these private networks. Luckily for us, once again Linux is here to save the day.

The implementation

The trick here is to use a virtual machine that acts as a router between the different networks. My choice went to VyOS, a Debian-based distro designed for routing. It can also be used as a firewall or a VPN gateway, do QoS, etc. The configuration is done with commands similar to those of Cisco IOS or other proprietary networking devices.

In this case, I only use the routing and NAT features. VyOS routes between its connected interfaces out of the box, so after configuring the NICs I just had to configure NAT for my two private networks.

The final configuration is pretty much:

With that configuration:

- any VM deployed with Tanzu can reach the external world through the NAT via the Dell LAN segment

- any VM with a single NIC can talk to its own segment via the DSwitch, or to the other network via the VyOS VM
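
The second point boils down to the usual on-link test a host performs before sending a packet. Below is a minimal Ruby sketch of that decision; the VM address 10.0.1.50 and the VyOS gateway address 10.0.1.1 are assumptions made up for the illustration, not values from my lab.

```ruby
require "ipaddr"

# Next hop for a single-NIC VM: the destination itself when it sits in the
# VM's own subnet (the frame is switched by the DSwitch), otherwise the
# default gateway (the VyOS VM).
def next_hop(own_cidr, gateway, destination)
  subnet = IPAddr.new(own_cidr) # IPAddr masks the host bits: 10.0.1.50/24 becomes 10.0.1.0/24
  subnet.include?(IPAddr.new(destination)) ? destination : gateway
end

# Hypothetical addresses, for illustration only.
puts next_hop("10.0.1.50/24", "10.0.1.1", "10.0.1.60") # same segment, sent directly
puts next_hop("10.0.1.50/24", "10.0.1.1", "10.0.0.10") # other network, sent to VyOS
```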

Ping issue

Tanzu explicitly states that Supervisor and Workload clusters must be able to connect to the HA Proxy data plane.

On my setup I used VMware HA Proxy and deployed the image with three networks. The Data Plane API management endpoint listens on the Management network, on the default port 5556.

The Supervisor cluster deployment went well, but during the workload cluster creation I got the following error:

unexpected error while reconciling control plane endpoint for my-cluster: failed to reconcile loadbalanced endpoint for WCPCluster csi/my-cluster: failed to get control plane endpoint for Cluster csi/my-cluster: Virtual Machine Service LB does not yet have VIP assigned: VirtualMachine Service LoadBalancer does not have any Ingresses

As a matter of fact I could :

- ping any IPs from the Load-Balancer

- ping the Load-Balancer IP from a node on the same network

- ping any IP Supervisor IP from the workload node and the other way around

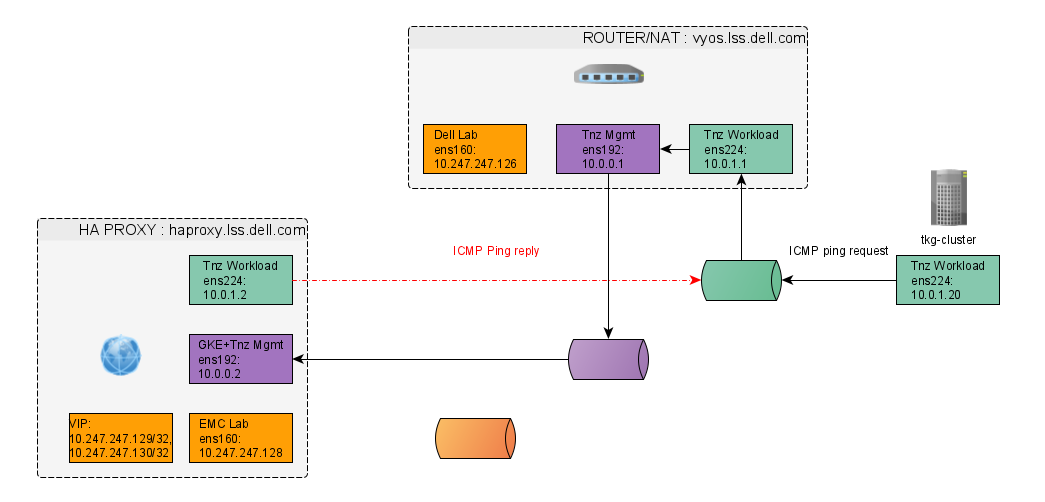

- but I could not ping a Management proxy IP which from a workload node

The problem here is that the ICMP echo request goes through the router, but the reply is sent directly on the DSwitch:

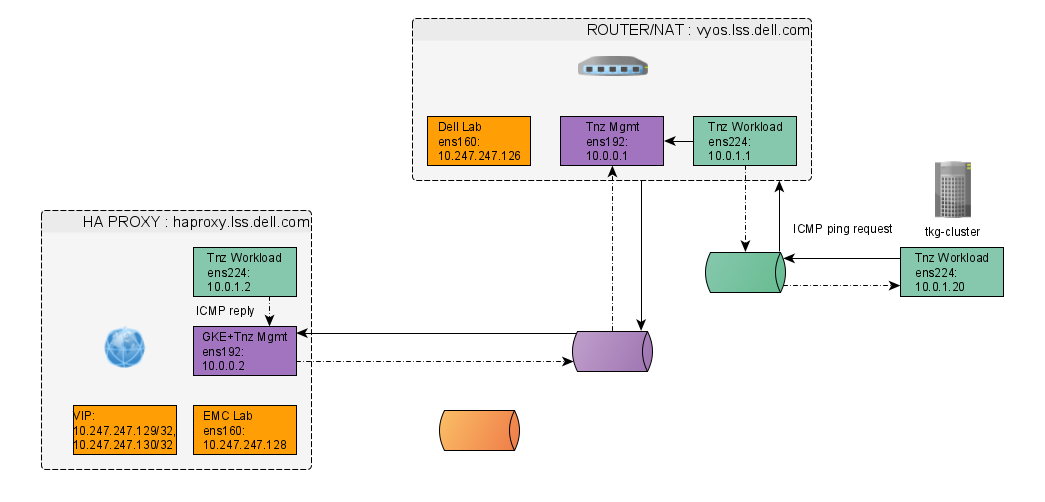

To solve that, the trick is to make sure all the requests to HAProxy are answered through the router. To do so, there are three things to do:

- Set the workload IP with a /32 mask, so that this interface is only used to reach this device itself, in: /etc/systemd/network/10-workload.network

- Update the gateway of the Management NIC for the two networks, management & workload, in: /etc/systemd/network/10-management.network

- Add a default route for the external world with a higher weight (metric) in: /etc/systemd/network/10-frontend.network
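
To see why these three changes make the reply go back through the router, here is a toy route lookup in Ruby (longest matching prefix first, then lowest metric). It is only a sketch of the idea: the HAProxy addresses (.2 on each network), the gateways 10.0.0.1 and 10.247.247.1, the metric 1024 and the workload node 10.0.1.50 are all assumptions chosen for the example, not the actual values of my setup.

```ruby
require "ipaddr"

# One routing-table entry: destination prefix, outgoing NIC, optional
# gateway (nil means the destination is on-link) and metric.
Route = Struct.new(:dest, :dev, :via, :metric)

def route(cidr, dev, via = nil, metric = 0)
  Route.new(IPAddr.new(cidr), dev, via, metric)
end

# Pick the preferred route: longest matching prefix, then lowest metric.
def lookup(table, target)
  ip = IPAddr.new(target)
  table.select { |r| r.dest.include?(ip) }
       .min_by { |r| [-r.dest.prefix, r.metric] }
end

# Hypothetical HAProxy routing table before the change.
before = [
  route("10.0.0.0/24", "management"),
  route("10.0.1.0/24", "workload"), # /24: every workload node looks on-link
  route("10.247.247.0/24", "frontend"),
  route("0.0.0.0/0", "frontend", "10.247.247.1"),
]

# Hypothetical HAProxy routing table after the three changes.
after = [
  route("10.0.0.0/24", "management"),
  route("10.0.1.2/32", "workload"), # /32: only the NIC's own address
  route("10.0.1.0/24", "management", "10.0.0.1"), # workload network via the router
  route("10.247.247.0/24", "frontend"),
  route("0.0.0.0/0", "frontend", "10.247.247.1", 1024), # higher metric
]

workload_node = "10.0.1.50"
[["before", before], ["after", after]].each do |label, table|
  r = lookup(table, workload_node)
  puts "#{label}: reply to #{workload_node} leaves via #{r.dev}, gateway #{r.via || 'none (on-link)'}"
end
```

With the /24 mask, the reply to a workload node leaves directly through the workload NIC; with the /32 mask, the only matching route for the workload network is the one through the management NIC and the router.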

Below are the NIC configurations, which you can edit and then apply with a systemctl restart systemd-networkd

Now the ICMP echo reply goes back through the same route and the ping gets its response:

DNS

Tanzu nodes need to be able to resolve the DNS names of every VM on the private networks.

VyOS does not come with a DNS service, so I went with dnsmasq on the Load-Balancer component.

VMware’s HAProxy runs on PhotonOS, which uses rpm packages. To install dnsmasq, run: yum install dnsmasq.

The dnsmasq.conf config is straightforward; it listens only on the workload network (lines 1 & 2), uses the entries in /etc/hosts for name resolution (line 3), does not forward requests for hosts on the private network (line 4) and logs the queries (line 5):

I used a small Ruby script to create the full range of /etc/hosts entries:

ruby cook-etc-hosts.rb > hosts

ruby cook-etc-hosts.rb 10.0.1. tnz-workload- >> hosts
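
For reference, a script with that command-line shape could look like the sketch below. This is not the original cook-etc-hosts.rb: the defaults used when no argument is given (10.0.0. and tnz-management-), the 1 to 254 host range and the tab-separated output are assumptions picked for the illustration.

```ruby
# Usage: ruby cook-etc-hosts.rb [ip-prefix] [name-prefix]
# Prints one /etc/hosts line per host of a /24 network.

# Build the entries, e.g. "10.0.1.7<TAB>tnz-workload-7".
def hosts_entries(ip_prefix, name_prefix, range = 1..254)
  range.map { |i| "#{ip_prefix}#{i}\t#{name_prefix}#{i}" }
end

if __FILE__ == $PROGRAM_NAME
  # Assumed defaults, used when the script is called without arguments.
  ip_prefix   = ARGV[0] || "10.0.0."
  name_prefix = ARGV[1] || "tnz-management-"
  puts hosts_entries(ip_prefix, name_prefix)
end
```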

Conclusion

For basic features (like NAT, static or dynamic routing, DHCP, firewall, etc.) VyOS is an excellent virtual router that can get you a long way if, like me, you lack an NSX-T license.